Interactive Environments

For SCI_Arc's Open Studio 2026, I didn't want to present the same kind of project everyone else was showing. Since my next semester would focus on rendering in Unreal Engine, I decided to learn it the hard way by building a challenging project for Open Studio. My idea was to reuse the base architecture of my Wicked Puppies computer vision system to place Open Studio visitors into Unreal Engine in real time as Pac-Man.

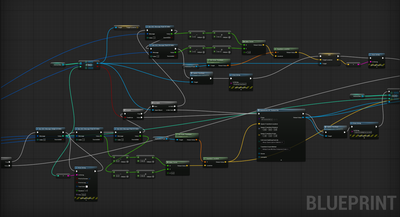

The Python system was rebuilt around path tracking. It dynamically assigns an ID to each visitor, builds a matrix of every active ID, and calculates each visitor's location using homography mapping. This data is then broadcast through an OSC server to Unreal Engine, which decodes the matrix. Finally, the locations are calibrated inside a custom Gaussian Splat of SCI_Arc's South Gallery, where Open Studio was held.
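The homography step can be illustrated with a small, dependency-free sketch. It assumes four floor markers whose camera-pixel and gallery-floor coordinates are both known (the values below are hypothetical, not the actual South Gallery calibration). It solves the standard eight-unknown linear system with the bottom-right entry fixed to 1, then maps any tracked pixel position onto the floor plane. The function and constant names are illustrative; a production pipeline would more likely call OpenCV's `cv2.findHomography` and `cv2.perspectiveTransform`.

```python
# Hypothetical sketch: map camera pixels to gallery-floor coordinates with a homography.

def solve_linear(a, b):
    """Solve the square system a·x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        # Swap in the row with the largest pivot to keep the elimination stable.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate points: three or more may be collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def find_homography(src, dst):
    """Return the 3x3 homography H (with H[2][2] = 1) mapping 4 src points onto 4 dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h0 x + h1 y + h2) / (h6 x + h7 y + 1), rearranged to be linear in h.
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def apply_homography(h, point):
    """Project a pixel (x, y) through H, dividing by the homogeneous coordinate w."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return (u, v)


# Hypothetical calibration: four floor markers seen by the camera (pixels)
# and their measured positions on the gallery floor (metres).
CAMERA_PX = [(102, 388), (1180, 402), (1010, 690), (240, 672)]
FLOOR_M = [(0.0, 0.0), (12.0, 0.0), (12.0, 8.0), (0.0, 8.0)]

H = find_homography(CAMERA_PX, FLOOR_M)

if __name__ == "__main__":
    print(apply_homography(H, (640, 540)))  # a visitor's feet somewhere mid-frame
```

Feeding a visitor's foot position (for example, the bottom centre of a detection box) through `apply_homography` gives floor coordinates that can then be scaled into the Unreal scene. The feet are a natural choice because a homography only maps points that lie on the calibrated floor plane.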

Process Images

Gaussian Splat of South Gallery

This project became a lesson in data management, and I learned a lot about how to structure data across translation layers. One of the key issues was multi-user detection in Unreal: because of the mismatch between Unreal's high frame rate and my camera's limited frame rate, multiple IDs would be sent in a row, causing confusion and breaking communication. After debugging and recognizing Unreal's limitations, I switched to a matrix system.
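One way to structure such a matrix system is to send a single message per camera frame that contains every tracked visitor, so Unreal always receives a complete snapshot instead of a stream of individual IDs it may read out of step. The layout here is an assumption, not the exact format used in this project: a leading count followed by one (ID, x, y) triple per visitor, encoded by hand according to the OSC 1.0 specification. The address `/visitors/matrix` and all function names are hypothetical.

```python
import socket
import struct

# Hypothetical OSC address; Unreal would need a matching receiver bound to it.
FRAME_ADDRESS = "/visitors/matrix"


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII bytes, a null terminator, then null padding to a 4-byte boundary."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_frame(address: str, tracks: list[tuple[int, float, float]]) -> bytes:
    """Pack every (track_id, x, y) from one camera frame into a single OSC message.

    Layout: int32 count, then one (int32 id, float32 x, float32 y) triple per visitor,
    all big-endian as OSC 1.0 requires.
    """
    type_tags = ",i" + "iff" * len(tracks)
    payload = struct.pack(">i", len(tracks))
    for track_id, x, y in tracks:
        payload += struct.pack(">iff", track_id, x, y)
    return osc_string(address) + osc_string(type_tags) + payload


def send_frame(sock: socket.socket, host: str, port: int, tracks) -> None:
    """Send one complete snapshot per camera frame over UDP, the transport most OSC setups use."""
    sock.sendto(encode_frame(FRAME_ADDRESS, tracks), (host, port))
```

On the receiving side, the decoder reads the count and then loops over the triples, replacing the positions for that frame in one step. This whole-snapshot design also tolerates dropped UDP packets reasonably well: a lost message costs one frame of updates, and the next message carries the full state again.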